FUSE AI Agents 2026: Plan/Scratch for Reliable Reasoning

Key takeaways

- Externalizing long-context via plan.md, scratch.md, decisions.json, and trace.log stabilizes multi-step reasoning and makes debuggability routine.

- FUSE-based mounts let agents operate with standard POSIX semantics (ls/grep/mv/append), reducing bespoke tool wrappers and improving reproducibility.

- MCP acts as the standardized bridge to enterprise systems while the filesystem provides a unified local workspace; together they enable governed, observable workflows.

- Local-first/on-prem deployments align with compliance and residency requirements; per-task mounts and path-level ACLs help enforce least privilege.

- Benchmarks are still early; treat performance claims as hypotheses and prioritize evaluation methods and observability.

Why files beat transient prompts for long-context

When plans, intermediate artifacts, and decisions are captured in files—plan.md, scratch.md, decisions.json, and trace.log—they become the ground truth for reasoning rather than a fading memory inside a token window. Files bring versioning, diffs, and checkpoints. You can review how a plan evolved, roll back bad branches, and reproduce a run from a known state. Think of it this way: the filesystem is the agent’s working memory you can audit, not an invisible prompt thread you can only guess at.

Jakob Emmerling’s early-2026 essay makes the conceptual case for “filesystem-first” agents, illustrating patterns like email-as-directories and POSIX read/write/list/move operations as natural agent actions. See the conceptual signal in “FUSE is All You Need – Giving agents access to anything via filesystems”. For a deeper look at why a governed context layer matters for agents, we’ve previously discussed architectural trade-offs in How LLM Agent Architectures Work.

The practical upside is reproducibility: files like decisions.json and trace.log give you deterministic traces of what happened and why. They also improve collaboration between engineers and agents—humans can read plan.md, edit a section, and let the agent continue. No special tool needed.

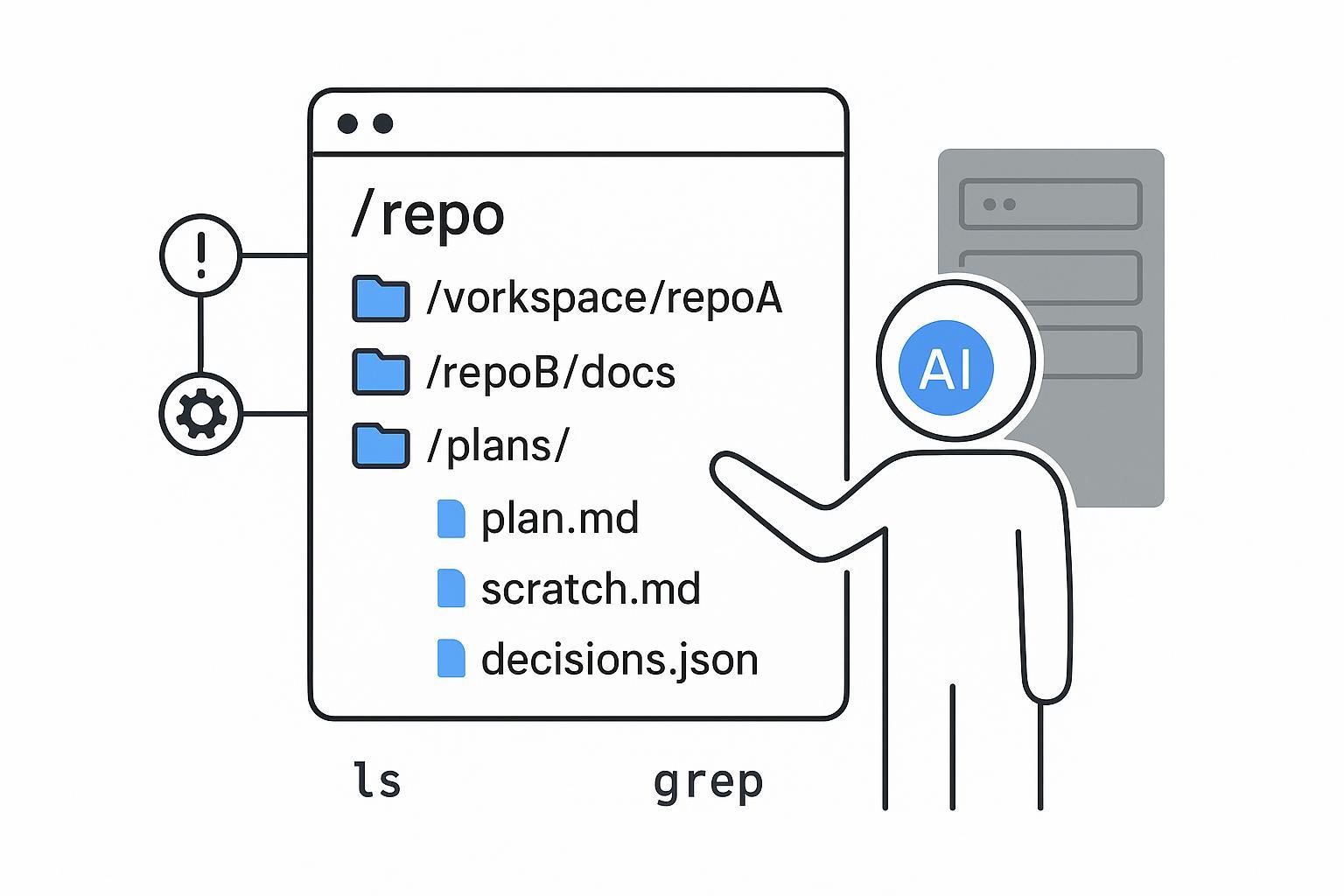

Running example — multi-repo engineering knowledge with plan/scratch workflows

Let’s anchor this in a concrete workspace:

- /workspace/repoA

- /workspace/repoB/docs

- /workspace/patterns

- /workspace/plans/plan.md

- /workspace/scratch/scratch.md

- /workspace/logs/trace.log

- /workspace/state/decisions.json

In this setup, agents use familiar POSIX ops:

- ls to enumerate repos and directories

- grep to search patterns across repos

- mv/cp to reorganize artifacts or promote scratch outputs

- echo >> and append to extend scratch.md or plan.md

- diff and patch to compare and apply changes across iterations

Lifecycle flow:

- Create or load plan.md with objectives and constraints.

- Iterate in scratch.md: try snippets, capture findings, and note blockers.

- Record decisions in decisions.json with rationale and timestamps.

- Log actions in trace.log with agent action IDs for auditability.

- Promote validated artifacts (docs, configs, code diffs) from scratch to the appropriate repo directories and open a PR via an MCP-exposed issue/PR tool.

Why does this work well for engineering knowledge across multiple repos? Because agents can “think” in files and operate across repos without bespoke adapters. The filesystem provides one abstraction; MCP servers expose external systems (issue tracker, CI, doc stores) through a standardized interface so agents don’t need a new wrapper for every tool.

Implementation patterns for FUSE AI agents and MCP interop

There are several viable ways to implement this pattern:

- Local-only FUSE with a SQLite-backed virtual filesystem: Suitable for developer machines and small pilots. Penberg outlines a design where the agent filesystem is backed by SQLite but mounted via FUSE, giving POSIX semantics so you can run git, grep, or ffmpeg directly. See the AgentFS proposal.

- DB-backed virtual FS (SQLite locally, Supabase or similar later): Start local, then migrate metadata and object storage to a managed DB for team workflows. Turso shows FUSE support for an AgentFS variant—mounting the SQLite-backed filesystem as a regular Linux FS so Unix tools run natively. Details in Turso’s “AgentFS FUSE” note.

- Object-store-backed AgentFS: For larger organizations, disaggregate storage (object store) and mount a virtual filesystem with caching. This enables scale-out reads and durable artifacts while keeping POSIX ops available to agents.

Where MCP fits: Treat MCP as the standardized bridge to external tools—issue trackers, CI, document stores, and internal services. The filesystem remains the local working substrate; MCP provides structured connectivity. MCP matured through 2025 with an anniversary spec update highlighting scopes and client security. See the one-year anniversary spec and the MCP authorization update. Anthropic’s engineering notes on code execution with MCP explain why moving intermediate computation outside the model, with sandboxing and least privilege, improves efficiency and control.

Editor adoption signals MCP’s momentum: JetBrains documents MCP integration so external clients (Claude Desktop, Cursor, etc.) can connect to IDE environments; see JetBrains MCP docs.

Governance, sandboxing, and compliance

Filesystem-first doesn’t mean free-for-all. You should design for least privilege:

- Per-task mounts: Mount only the directories the agent needs for a task. Sensitive sources get read-only mounts; scratch partitions are write-scoped.

- Path-level ACLs and audit logs: Every POSIX op should be logged with timestamps and agent action IDs. Align ACLs with business roles and tie audit trails to compliance reporting.

- OS-level controls: Use SELinux or AppArmor profiles, and consider Landlock for unprivileged filesystem access control. In containerized environments, enforce SCCs or use sandboxed containers to avoid broad capabilities like CAP_SYS_ADMIN in production paths.

Local-first/on-prem alignment helps with residency and compliance. Mount governed stores inside your network boundary; sync to object storage later if needed. Map MCP scopes to the same least-privilege model used at the filesystem layer.

Observability and evaluation

Stabilizing reasoning is only half the job; you also need to measure it.

- POSIX tracing: Log ls/grep/mv/append operations with agent IDs and timestamps. Diff plan.md versions between steps to visualize plan evolution.

- Core metrics: task success rate, latency, reproducibility (can we rerun and get the same outputs?), and auditability (complete action trails).

- Benchmark methodology: Compare a filesystem-first agent vs an API/MCP-only toolchain on a multi-step engineering task across two or more repos. Keep workloads identical; measure success rates and end-to-end latency; capture token usage and operator effort. Until public head-to-heads exist, frame results as pilot findings and share your harness.

For broader context on the “context layer” in agent systems, see Building a RAG Model That Scales.

Limitations and when APIs win

FUSE adds userspace mediation, which can increase CPU and latency compared to kernel filesystems. Heavy write or metadata‑intensive workloads may feel this more acutely. Some distributed filesystems mounted via FUSE relax POSIX guarantees, and daemon downtime affects metadata consistency. Mitigations include leveraging kernel page cache, batching writes, and confining agents to read‑mostly sources with write‑scoped scratch areas.

Streaming or transactional domains (e.g., high-frequency messaging, financial transactions) can still favor direct SDKs/APIs for performance and consistency reasons. Hybrid patterns are common: use the filesystem for plan/scratch/state and MCP for structured, transactional calls.

Where a context base fits (neutral mention)

Disclosure: Puppyone is our product.

A governed context base can underlie this architecture by storing enterprise knowledge as structured “Know‑How” (JSON/Graph), supporting hybrid indexing and deterministic retrieval, and deploying locally via Docker for privacy. In practice, teams can use a context base to mount curated, versioned knowledge into the agent’s workspace while MCP connects external systems. Puppyone’s site outlines local-first and MCP‑aligned context governance; see Puppyone’s Context Base. For adjacent concepts, explore How Agentic Process Automation Is Transforming Enterprise Operations in 2026.

Next steps and resources

If you’re evaluating this pattern, start small: pilot a local FUSE mount with plan/scratch files over two repos, add MCP connectors for PRs/issues, and instrument POSIX tracing. Define least-privilege mounts and path-level ACLs early.

Selected references:

- Concept framing: “FUSE is All You Need – Giving agents access to anything via filesystems”

- Implementation feasibility: AgentFS proposal (Penberg); Turso’s AgentFS FUSE update

- Tool/connectivity maturity: MCP one-year anniversary spec; Anthropic engineering on MCP code execution

- Editor adoption: JetBrains MCP integration docs

FAQs

Q1: Does FUSE overhead significantly impact agent performance?

FUSE adds modest latency, but agent workloads are typically read-heavy with bursty writes. Kernel page caching mitigates overhead after initial reads. Turso pilots show <10ms latency for engineering tasks like cross-repo grep—negligible versus LLM inference. Batch appends or RAM-backed scratch partitions handle write spikes. A 5–15% latency tradeoff is justified by deterministic traceability via trace.log and replayable workflows from plan.md checkpoints.

Q2: How do I start a filesystem-first pilot without infrastructure overhaul?

Pick a contained two-repo task (e.g., document an auth flow). Mount only those repos plus empty plans/, scratch/, and logs/ directories via fusepy or fuse-turso locally. Have the agent initialize plan.md, iterate in scratch.md, and log to trace.log. Add a minimal MCP connector for GitHub PRs. Run entirely on a developer laptop. Validate reproducibility and debugging gains within two weeks—no production changes required.

Q3: When should I avoid filesystem-first in favor of direct APIs?

Avoid filesystem-first for high-frequency streaming (real-time market data), ACID-critical transactions (payments), or stateless single-step tasks (URL summarization). These demand sub-millisecond latency or native transaction guarantees that FUSE can't provide. Hybrid patterns often win: use the filesystem for plan/scratch/state while routing latency-sensitive calls through scoped MCP tools. Rule of thumb: if you'd want to git diff plan.md tomorrow to audit a decision, filesystem-first adds value.